Hybrid Logical Clocks: Having your cake and eating it, too

Part 4: How reality forces us to bring time back

We started this series by looking at how physical time breaks down.

Clocks drift, they jump, and they lie. Then we replaced them with causality, counters, and ancestry.

We tried to get rid of time entirely.

We couldn't.

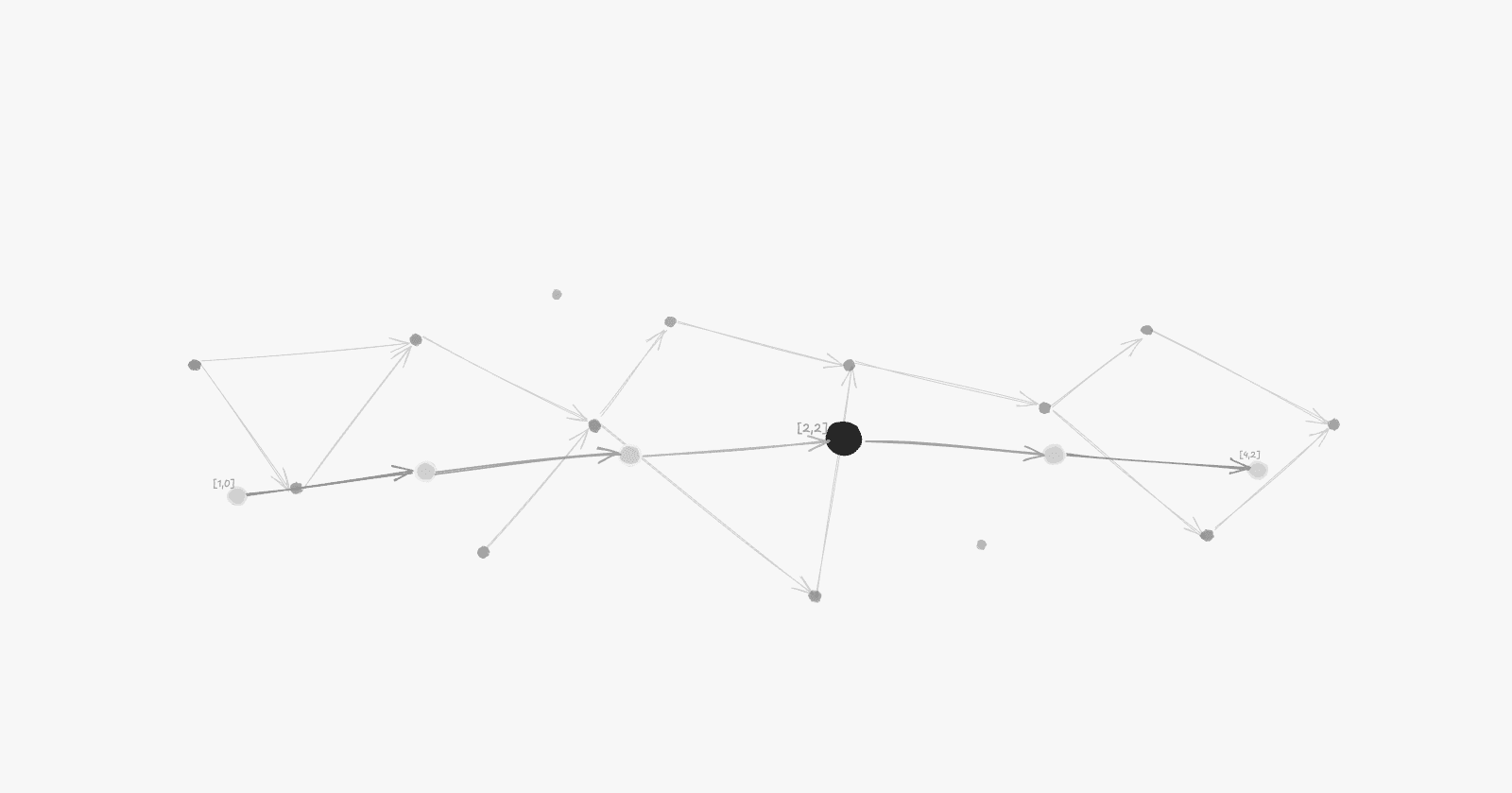

Lamport clocks preserve causality, but a Lamport timestamp of 52 tells you nothing about whether something happened five seconds ago or last Tuesday. It doesn’t help you debug when a customer asks why their transaction failed a few minutes ago.

Vector clocks track ancestry, but they don't help a bank auditor who needs to know exactly when a million euros left an account.

Logical clocks are disconnected from the real world. And in real systems, that matters.

Users care about time. Audits care about time. Expiration logic cares about time.

Physical clocks approximate real time but break causality. Logical clocks preserve causality but ignore the real world.

Hybrid Logical Clocks (HLC) exist because we need both.

The core idea

An HLC timestamp is actually two components combined:

(physical_time, logical_counter)

For a long time, this felt like one of those distributed systems problems that only existed on whiteboards. At least to me.

Then I saw a system clock jump backwards in production, and suddenly the whole thing stopped feeling theoretical.

Normally, you know what day it is because you check the calendar. But after long flights, illness, night shifts, jet lag, or a chaotic week, the days blur.

You stop trusting the wall clock directly. Instead, you reconstruct order from anchors: you know you went to the gym after salsa, you know you called your mother before the train ride, you know you moved after the breakup, and you know this happened after Amsterdam because you already had the new job.

You don’t stop to repair your sense of time or fix the calendar on the wall. You let real-world days lead when they are clear, but the moment you lose your bearings, you fall back on what must still be true about sequence: the gym had to come after salsa.

That’s the relationship inside a Hybrid Logical Clock.

The Physical Part is the system's best guess of current wall-clock time. The Logical Part is a safety net, a counter that only moves when the physical clock can't order events on its own.

Think of it as: “What time is it?” + “What must still be true about order?”

The physical clock leads when it can. The logical counter takes over when it can't.

It doesn't try to fix the clock. It just makes sure time never moves backwards.

How it handles the lies

Remember the physical clock from Part 1, the one that jumps, drifts, and stands still?

If the physical clock moves forward, the HLC follows it and resets the logical counter to zero. If it jumps backwards, the HLC ignores it and increments the counter instead. If it stands still, the HLC keeps moving forward logically.

Time always moves forward. Causality is always preserved. Even when the underlying clock is lying.

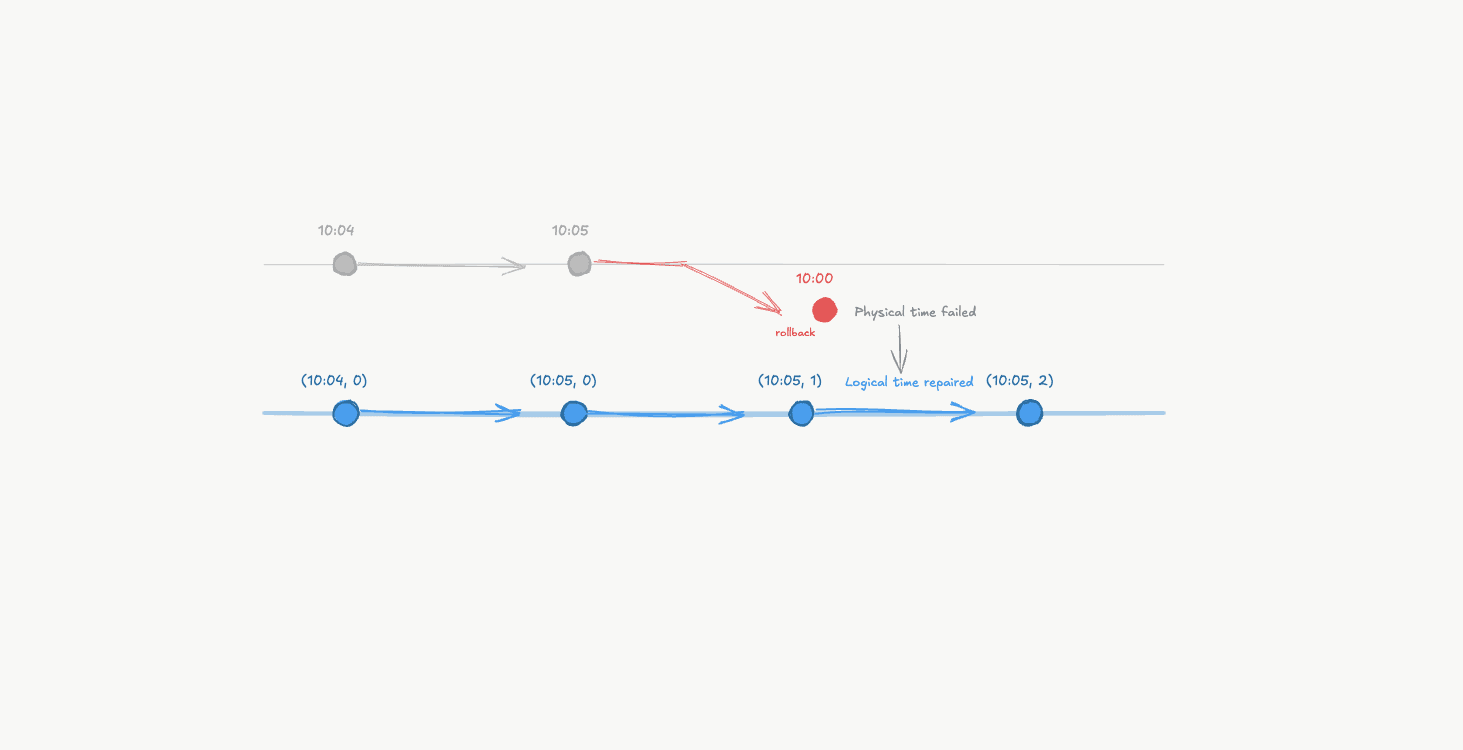

How It Works: Physical Time Leads, Logical Time Repairs

This is the central rule of the Hybrid Logical Clock. We treat the physical clock as our first choice, but we keep a logical counter as our fallback. The logical component only exists to catch the physical clock when it trips.

The Local Rule: Keeping the Line

Every time a machine does something (writes a row, sends a packet), it checks the time.

If the clock moved forward: use the new time and reset the counter to zero. The world still makes sense.

If the clock stalled or jumped backward: ignore the jump. Keep the last known high time and increment the logical counter instead.

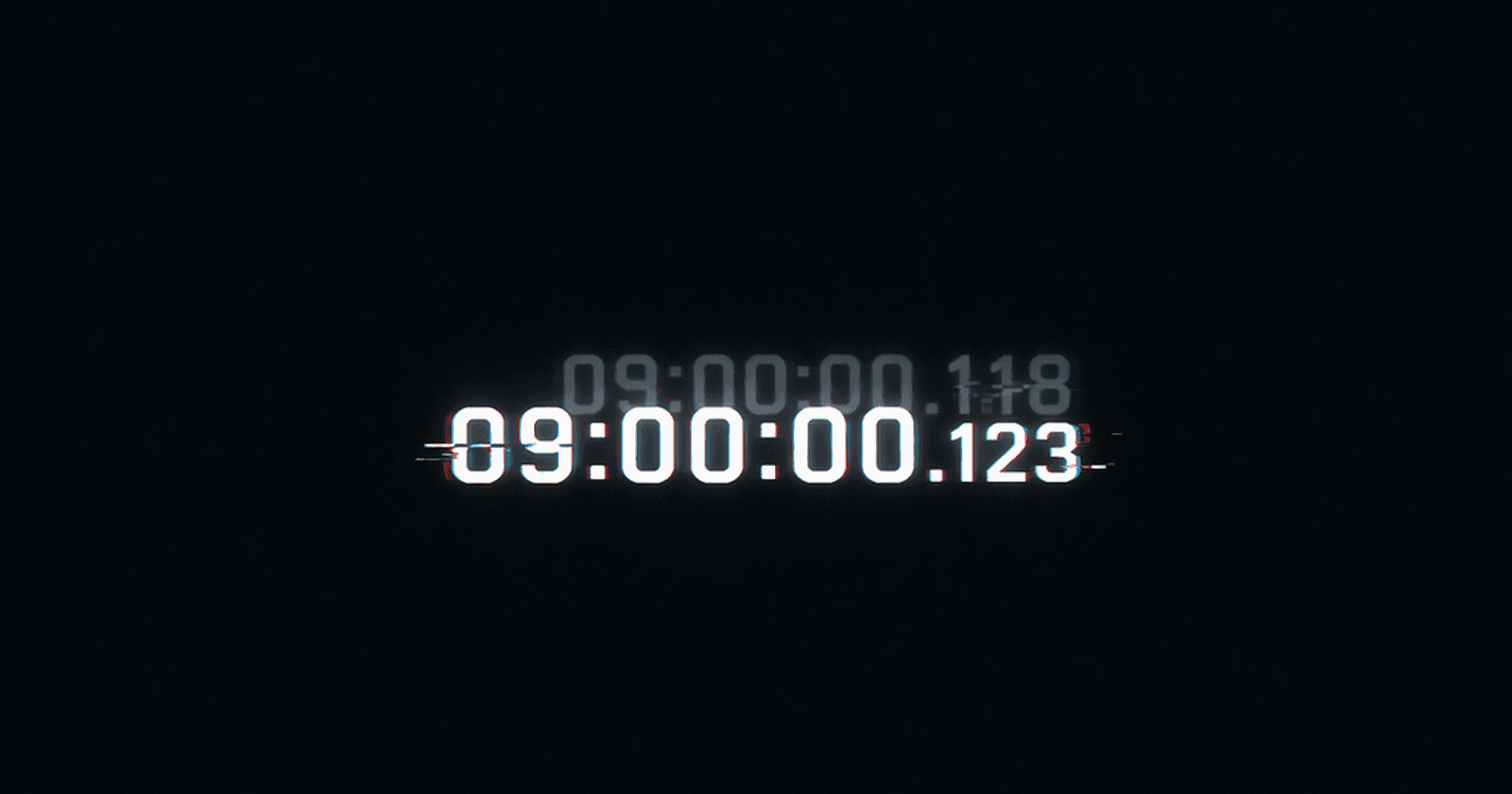
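Here is a minimal Python sketch of that local rule. It is an illustration under assumptions, not how any particular database implements it: the names `HLC`, `HybridClock`, and `tick` are invented for this post, and physical time is assumed to be integer milliseconds.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True, order=True)
class HLC:
    physical: int  # highest wall-clock reading seen so far (assumed: milliseconds)
    logical: int   # counter that breaks ties when physical time cannot


def wall_clock_ms() -> int:
    return time.time_ns() // 1_000_000


class HybridClock:
    def __init__(self, read_clock: Callable[[], int] = wall_clock_ms) -> None:
        self._read_clock = read_clock
        self._last = HLC(0, 0)

    def tick(self) -> HLC:
        """Timestamp a local event such as a write or a send."""
        now = self._read_clock()
        if now > self._last.physical:
            # Clock moved forward: follow it and reset the counter.
            self._last = HLC(now, 0)
        else:
            # Clock stalled or jumped back: keep the high-water mark
            # and increment the counter instead.
            self._last = HLC(self._last.physical, self._last.logical + 1)
        return self._last
```

The clock source is injected so you can feed it a misbehaving clock in a test and watch the counter take over.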

The Concrete Lie: Seeing the Counter Kick In

Imagine two events on Node A.

Event 1: The physical clock says 10:05. HLC: (10:05, 0).

The Glitch: The clock jumps back to 10:00.

Event 2: Instead of letting the system travel back in time, the HLC stays at 10:05 and increments the counter. HLC: (10:05, 1).

To a human, both events happened at 10:05. To the machine, that +1 is the evidence that Event 2 definitely followed Event 1, despite what the broken clock says.

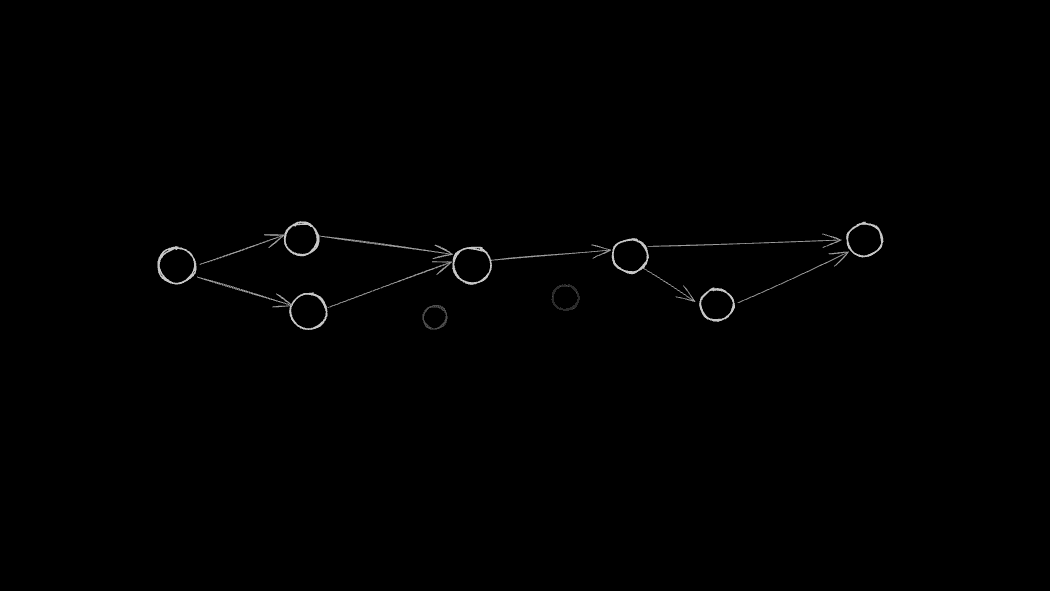
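To see why that +1 matters, here is a small sketch that sorts the two events from the example twice: once by the raw wall-clock reading, once by the HLC. Times are written as minutes since midnight (10:05 becomes 605), which is just a convenience for this example.

```python
# Each entry: (name, raw wall-clock reading, HLC assigned by the local rule)
events = [
    ("event 1", 605, (605, 0)),  # clock says 10:05
    ("event 2", 600, (605, 1)),  # clock jumped back to 10:00
]

# Sorting by the raw clock puts event 2 first, which is wrong.
by_wall_clock = [name for name, raw, hlc in sorted(events, key=lambda e: e[1])]

# Tuples compare the physical part first, then the counter,
# so sorting by HLC recovers the real order.
by_hlc = [name for name, raw, hlc in sorted(events, key=lambda e: e[2])]

print(by_wall_clock)  # ['event 2', 'event 1']
print(by_hlc)         # ['event 1', 'event 2']
```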

The Message Rule: Merging Histories

When two machines talk, they exchange their worldviews. They compare HLCs and adopt the highest time either has seen.

Node A is at (10:05, 1). Node B's local clock is lagging at 10:03.

When Node B receives Node A's message, it doesn't stay in the past. It moves the physical part of its HLC up to 10:05 and sets the counter to 2, one past Node A's. This signals that the receive event came after Node A's last known event.
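A sketch of that merge, following the receive rule as it is usually described for HLCs. The function name `receive` and the tuple layout are assumptions made for this example, and times are again minutes since midnight.

```python
from typing import Tuple

Timestamp = Tuple[int, int]  # (physical, logical)


def receive(local: Timestamp, remote: Timestamp, physical_now: int) -> Timestamp:
    """Merge an incoming HLC into the local one when a message arrives."""
    local_pt, local_c = local
    remote_pt, remote_c = remote

    # Adopt the highest physical time anyone has seen.
    new_pt = max(local_pt, remote_pt, physical_now)

    if new_pt == local_pt and new_pt == remote_pt:
        new_c = max(local_c, remote_c) + 1  # tie: go past both counters
    elif new_pt == local_pt:
        new_c = local_c + 1                 # local history is ahead
    elif new_pt == remote_pt:
        new_c = remote_c + 1                # sender is ahead: go past its counter
    else:
        new_c = 0                           # our own clock is ahead of both

    return (new_pt, new_c)


# Node B is lagging at 10:03 and receives (10:05, 1) from Node A.
node_b = receive(local=(603, 0), remote=(605, 1), physical_now=603)
print(node_b)  # (605, 2)
```

If Node B's own clock had already read 10:10 when the message arrived, the result would be (10:10, 0): physical time leads again and the counter resets.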

The exact mechanics matter less than the principle: use the physical clock whenever possible so timestamps make sense to humans. Use the counter to ensure causality is never violated.

The logical counter only kicks in when physical time alone would get the order wrong. It's the safety net that keeps a lying clock from breaking causality.

What This Achieves

In distributed systems, you usually have to pick your poison.

Lamport clocks gave us order, but lost the real world. Vector clocks gave us the full causal picture, but grew into a metadata problem that scales badly. Physical clocks gave us the time of day, but couldn't be trusted.

Hybrid Logical Clocks are an attempt to hold onto the useful parts of all three.

For the first time in this entire series, we stop choosing between causality and clocks.

The timestamps still make sense to humans. They still roughly correspond to real time. But unlike vector clocks, the metadata never grows. Five nodes or five thousand, the size stays the same.
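To make the fixed size concrete, here is a sketch that packs both components into a single 64-bit integer. The 48/16 bit split is an assumption chosen for this example, one possible layout rather than a standard, and `vector_clock` is only there for contrast.

```python
from typing import Dict, Tuple

PHYSICAL_BITS = 48  # assumption: milliseconds since the epoch
LOGICAL_BITS = 16   # assumption: up to 65,535 ties per millisecond


def pack(physical_ms: int, logical: int) -> int:
    """Pack an HLC into one integer that sorts the same way the tuple does."""
    if not 0 <= physical_ms < (1 << PHYSICAL_BITS):
        raise ValueError("physical component out of range")
    if not 0 <= logical < (1 << LOGICAL_BITS):
        raise ValueError("logical counter overflow")
    return (physical_ms << LOGICAL_BITS) | logical


def unpack(packed: int) -> Tuple[int, int]:
    return packed >> LOGICAL_BITS, packed & ((1 << LOGICAL_BITS) - 1)


def vector_clock(node_count: int) -> Dict[str, int]:
    """A vector clock needs one entry per node, so it grows with the cluster."""
    return {f"node-{i}": 0 for i in range(node_count)}


stamp = pack(1_700_000_000_000, 3)
print(stamp.bit_length() <= 64)   # True, whatever the cluster size
print(len(vector_clock(5)))       # 5
print(len(vector_clock(5000)))    # 5000
```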

It’s still a compromise. But it’s a practical one.

Bounded Uncertainty

In Part 1, we said there is no global “now”.

That's still true. But systems can say something weaker: I don't know exactly what time it is, but I know I am within ε of the truth.

That ε (epsilon) is uncertainty.

Clock synchronisation protocols don't give you the exact time. They give you a range.

“The current time is between 10:00:01.000 and 10:00:01.007.” A small window where the system cannot be sure of the exact ordering.

Hybrid clocks don't pretend that the window doesn't exist. They operate inside it. They don't assume perfect time. They assume bounded error, and within that bound, they make sure causality is never violated.
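A sketch of what "bounded error" lets you claim. The `Reading` type and `definitely_before` are hypothetical names for this example; the numbers are millisecond offsets within one second, with ε = 3.5 ms so the first window matches the 7 ms range quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    timestamp_ms: float  # what the clock reported
    epsilon_ms: float    # how far off it might be, in either direction

    @property
    def earliest(self) -> float:
        return self.timestamp_ms - self.epsilon_ms

    @property
    def latest(self) -> float:
        return self.timestamp_ms + self.epsilon_ms


def definitely_before(a: Reading, b: Reading) -> bool:
    """True only when the two windows cannot overlap."""
    return a.latest < b.earliest


a = Reading(3.5, 3.5)   # window [0.0, 7.0]
b = Reading(8.0, 3.5)   # window [4.5, 11.5], overlaps a
c = Reading(20.0, 3.5)  # window [16.5, 23.5], clear of a

print(definitely_before(a, b))  # False: the clocks alone cannot decide
print(definitely_before(a, c))  # True
```

Note that `b` carries a later timestamp than `a`, yet physical time alone cannot prove it happened later.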

But bounded doesn't mean zero.

That window is still there. And in some scenarios, it matters.

What happens when you need to be absolutely certain that Event A happened before Event B, and your clocks are sitting inside that 7ms window of doubt?

That's where the bound stops being enough.

TrueTime: Making Uncertainty Explicit

Most systems are built on a polite lie. They pick a point in the middle of their uncertainty window, slap a timestamp on it, and call it "Now." They pretend the doubt doesn't exist.

Google Spanner does the opposite.

Instead of returning a single timestamp, TrueTime returns an interval:

[Earliest, Latest]

It is not a guess. It’s a confession. The system is telling you: “The true time is somewhere in here, and I refuse to be more precise than the laws of physics allow.”
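The Spanner paper describes the TrueTime interface as three calls: `TT.now()`, `TT.after(t)`, and `TT.before(t)`. Here is a rough Python imitation of that shape. It is not Google's implementation: `IntervalClock` is a made-up name, and ε is a fixed constant here, whereas the real system derives it from its time sources.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Interval:
    earliest: float
    latest: float


class IntervalClock:
    def __init__(self, epsilon: float,
                 read_clock: Callable[[], float] = time.time) -> None:
        self.epsilon = epsilon          # assumed constant for this sketch
        self._read_clock = read_clock

    def now(self) -> Interval:
        """The true time is somewhere inside the returned interval."""
        t = self._read_clock()
        return Interval(t - self.epsilon, t + self.epsilon)

    def after(self, t: float) -> bool:
        """True only if t has definitely passed."""
        return self.now().earliest > t

    def before(self, t: float) -> bool:
        """True only if t has definitely not arrived yet."""
        return self.now().latest < t
```

For any `t` that falls inside the current interval, both `after(t)` and `before(t)` return `False`. That is the confession in code form.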

To make that window as small as possible, Google deployed atomic clocks and GPS receivers across its datacentres.

The goal wasn't to eliminate uncertainty. That’s impossible.

The goal was to shrink ε until it was manageable. Usually, that means under 7ms. Often, it’s under 1ms.

The smaller the window, the more useful the guarantee.

But the window never fully closes. There is always an interval, however tiny, where the system cannot safely determine the exact order of events.

What happens when a transaction falls inside that gap?

The Price of Truth: Commit Wait

You already know the answer.

You wait.

Spanner gives each transaction a commit timestamp, and that timestamp always falls somewhere inside the uncertainty window. Spanner doesn't guess. It doesn't pick a side. It pauses. It holds that pause for the entire duration of the interval before it allows the transaction to commit.

That silence is Commit Wait.

It's not a bug. It's not a failure mode. It’s a system admitting it doesn’t trust its own clocks, and a deliberate design decision that says: we would rather be slow than be wrong.

The cost is real. Every single commit has to wait for the uncertainty to collapse. You pay that tax in write latency on every transaction. In a system processing millions of operations, those milliseconds matter.

But by the time the system hands you a timestamp, the window has closed. The ordering is no longer a guess but a guarantee.
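A minimal sketch of the waiting itself, assuming a fixed ε and a simple polling loop. The function and parameter names are invented for this example, and real Spanner does this inside its commit protocol rather than in a standalone helper.

```python
import time
from typing import Callable


def wall_clock_ms() -> float:
    return time.time() * 1000.0


def sleep_ms(duration_ms: float) -> None:
    time.sleep(duration_ms / 1000.0)


def commit_wait(
    epsilon_ms: float,
    read_clock: Callable[[], float] = wall_clock_ms,
    sleep: Callable[[float], None] = sleep_ms,
    poll_ms: float = 1.0,
) -> float:
    """Pick a commit timestamp, then block until it is definitely in the past."""
    # Use the latest edge of the current interval as the commit timestamp.
    commit_ts = read_clock() + epsilon_ms

    # Wait until even the earliest edge of "now" is past that timestamp.
    while read_clock() - epsilon_ms <= commit_ts:
        sleep(poll_ms)

    return commit_ts
```

With ε = 4 ms the loop holds for roughly 8 ms, the full width of the interval. Shrinking ε is the only way to shrink the pause, which is why the hardware matters.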

Google didn't fix time with atomic clocks and GPS. They just shrank the uncertainty window until the wait became practical.

The Spectrum

Every system in this series is making the same trade-off and answering the same question: not how do we find certainty, but how do we act without it?

We stopped looking at the sun and started counting. Modern distributed systems are still trying to combine the two.

The further right you go, the stronger your ordering guarantees become, and the more you pay.

That's the spectrum. It is not a ranking. It is a menu.

On the far left, we have systems that trust physical time directly. Cassandra's last-write-wins sits here. Fast, simple, and honest about what it is. If your clocks drift, data can be silently overwritten. But if approximate ordering is acceptable, it's often good enough.
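Here is what that silent overwrite looks like in a toy last-write-wins register. This is a generic sketch, not Cassandra's code or API: `LastWriteWinsStore` is a hypothetical class and the timestamps are whatever each writer's clock claimed.

```python
from typing import Dict, Tuple


class LastWriteWinsStore:
    def __init__(self) -> None:
        self._data: Dict[str, Tuple[int, str]] = {}

    def write(self, key: str, value: str, timestamp_ms: int) -> bool:
        """Keep the value with the highest timestamp; drop anything older."""
        current = self._data.get(key)
        if current is not None and current[0] >= timestamp_ms:
            return False  # discarded without any error reaching the writer
        self._data[key] = (timestamp_ms, value)
        return True

    def read(self, key: str) -> str:
        return self._data[key][1]


store = LastWriteWinsStore()

# Node A's clock runs 5 seconds fast. Real time is 10_000_000 ms.
store.write("doc", "draft", timestamp_ms=10_005_000)

# One real second later, Node B writes with an accurate clock.
accepted = store.write("doc", "final", timestamp_ms=10_001_000)

print(accepted)           # False
print(store.read("doc"))  # draft
```

The later write loses because the earlier writer's clock was ahead. Nothing fails, nothing is logged, and the store is doing exactly what it promised.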

In the middle are systems like CockroachDB and MongoDB, which use Hybrid Logical Clocks. They preserve causality and stay close to real time, but they still live with a window of doubt. They don't use any specialised hardware or atomic clocks. They are just software managing uncertainty carefully enough that most systems can tolerate it.

On the right are systems that refuse to guess. Google Spanner uses TrueTime to shrink uncertainty as much as possible, then Commit Wait delays transactions until that uncertainty has passed. It provides stronger guarantees, but you pay in hardware and write latency.

How do you choose?

One question: what is the cost of being wrong?

If a comment appears out of order on a social feed, the cost is negligible.

If two users cannot under any circumstances purchase the same asset on a global ledger, the cost is everything.

You choose based on what your business cannot afford to get wrong.

That answer determines where you sit on the spectrum.

The Culmination

We started this journey with a simple betrayal: Clocks lie.

We took time out of the equation completely, only to reintroduce it later on our own terms. Physical time failed us. We replaced it with causality, only to watch Lamport clocks preserve order but lose concurrency. We recovered concurrency with vector clocks, just to realise we had abandoned the real world. We saw hybrid clocks carefully marry the two, and watched TrueTime turn uncertainty into an honest confession.

The solution was never perfect clocks. It was building systems that could survive imperfect ones.

And that turns out to be a much older problem than distributed systems.

Every field that has to act under uncertainty (medicine, law, science, navigation) has arrived at the same answer: you don't wait for certainty. You build something honest enough to work without it.

Distributed systems just made that problem visible in nanoseconds.